Google is reorganizing the team behind Project Mariner, its research prototype for an AI agent that navigates the Chrome browser and completes tasks on a user’s behalf. WIRED has learned from sources close to the matter that several Google Labs staffers who helped build the prototype have been reassigned to projects the company considers higher priority.

A Google spokesperson confirmed the personnel shifts and said the technology developed under Project Mariner is not being abandoned. Instead, its computer-use capabilities will be integrated into Google’s broader agent strategy. That integration is already underway, with some of Project Mariner’s capabilities being folded into existing and upcoming agent products. Notably, the recently launched Gemini Agent, which runs on Gemini 3, incorporates insights from Project Mariner, a sign that Google is moving toward a more unified agent lineup.

This strategic adjustment comes amid an intensifying race among major AI labs to build and deploy capable AI agents. The landscape is shifting quickly, spurred by tools like OpenClaw, which is used mainly by developers today but is widely expected to become the foundation for general-purpose AI assistants for consumers and businesses. Nvidia CEO Jensen Huang underscored the point at the company’s recent developer conference, likening agent technology to a new operating system for computing. "Every company in the world today needs to have an OpenClaw strategy," Huang said.

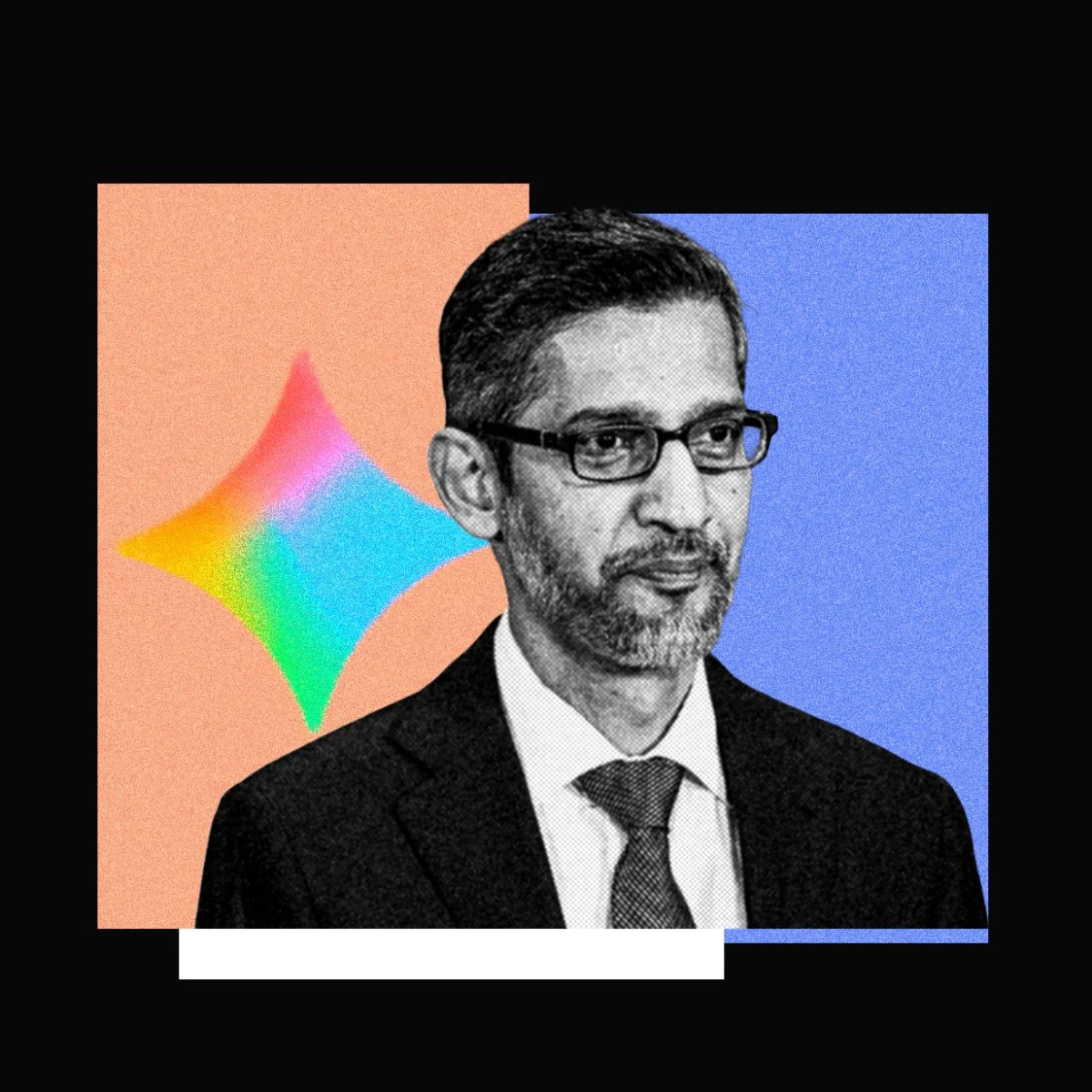

Google’s recalibration follows a period of early excitement around browser agents. Google CEO Sundar Pichai showcased Project Mariner at last year’s I/O conference, positioning browser-based AI agents as the industry’s next frontier. At the time, competitors like OpenAI and Perplexity were also introducing consumer-facing agents that automate online tasks by clicking, scrolling, and filling out forms on web pages the way a person would. So far, however, adoption of these browser agents has fallen short of those early expectations.

The numbers illustrate the gap: Perplexity’s Comet browser agent reported approximately 2.8 million weekly active users in December 2025, while OpenAI’s ChatGPT Agent has reportedly fallen to fewer than 1 million weekly active users in recent months. Next to the hundreds of millions of people who use ChatGPT each week, these dedicated browser agents reach only a small fraction of users. That gap has prompted a re-evaluation of how AI agents are best built and deployed.

The Shifting Sands of Agent Technology: From Browsers to Command Lines

The AI sector’s momentum has shifted sharply over the past year toward agents that operate through command-line interfaces, such as Claude Code and OpenClaw. OpenAI reportedly recruited OpenClaw’s creator, underscoring the strategic importance of this area. These command-line agents complete tasks more robustly and reliably than their browser-based predecessors, and many now include computer use as one feature among a broader set of agentic capabilities. As a result, browser agents look increasingly limited as standalone products.

Kian Katanforoosh, CEO of the AI upskilling platform Workera and a lecturer on AI at Stanford University, argues that browser-based computer-use agents have met a lukewarm reception largely because they are computationally demanding. These agents typically work by capturing a series of screenshots of a webpage, processing the images with an AI model, and then acting on the model’s interpretation. That loop can be slow and, at times, unreliable, which frustrates users and limits practical use.
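The screenshot-driven loop described above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the `Action` type, the `run_screenshot_agent` function, and the `capture`, `decide`, and `execute` callbacks are all hypothetical names, and the "model" in the demo is a scripted lookup table rather than a real vision model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Action:
    """One UI step chosen by the model (hypothetical schema)."""
    kind: str          # e.g. "click", "type", "scroll", or "done"
    target: str = ""   # e.g. a selector or screen region


def run_screenshot_agent(
    capture: Callable[[], bytes],
    decide: Callable[[bytes, List[Action]], Action],
    execute: Callable[[Action], None],
    max_steps: int = 20,
) -> List[Action]:
    """Repeat: take a screenshot, ask the model for an action, perform it."""
    history: List[Action] = []
    for _ in range(max_steps):
        screenshot = capture()                 # one image per step
        action = decide(screenshot, history)   # one model call per step
        if action.kind == "done":
            break
        execute(action)
        history.append(action)
    return history


def demo() -> List[Action]:
    """Drive the loop with canned screenshots and a scripted 'model'."""
    screens = iter([b"login-page", b"form-filled", b"confirmation"])
    script = {
        b"login-page": Action("click", "#email"),
        b"form-filled": Action("click", "#submit"),
        b"confirmation": Action("done"),
    }
    performed: List[Action] = []
    return run_screenshot_agent(
        capture=lambda: next(screens),
        decide=lambda shot, hist: script[shot],
        execute=performed.append,
    )
```

Every pass through the loop costs one screenshot and one model call, which is where the slowness Katanforoosh describes would come from in a real system.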

"What Claude Code and OpenClaw demonstrated was that it’s actually much more efficient to work with the terminal, because the terminal is text-based and LLMs are text-based," Katanforoosh explained. "It’s probably 10 to 100X less steps to get to the same outcomes." This efficiency gain is attributed to the inherent compatibility between text-based Large Language Models (LLMs) and the text-driven nature of command-line interfaces, significantly reducing processing overhead and latency.

None of this signals the end of browser agents or computer-use research, and work on these capabilities continues. The startup Standard Intelligence, for instance, released a computer-use model last month that was trained on video data rather than static screenshots. The company says it has developed a video encoder that compresses video streams to fit into an AI model’s context window, which it claims makes the model 50 times more efficient than previous computer-use models. To demonstrate, Standard Intelligence connected the model to a vehicle, a live video feed, and a computer keyboard. The system was reportedly able to briefly pilot a car autonomously through San Francisco, showcasing the potential for real-time visual understanding and action.

The Enduring Role of Graphical Interfaces and the Rise of Hybrid Agents

Despite the growing emphasis on command-line interfaces, the necessity of graphical user interfaces (GUIs) for certain tasks remains a critical consideration. Ang Li, CEO of the computer-use agent startup Simular and a former researcher at Google DeepMind, argues that GUI-based agents will continue to play a vital role in the AI agent ecosystem. "I do see there always being an 80/20 split," Li stated. "You can use the terminal to solve a lot of problems already, but there will always be problems you have to solve in the GUI. For example, if you want to go to a health care insurance website, or some other legacy software, they often don’t have an API that a terminal agent can just call up." This highlights the persistent need for agents that can interact with legacy systems and complex graphical applications that lack programmatic interfaces.

The broader trend within AI laboratories appears to be a strategic shift away from solely focusing on browser agents towards what are often termed "coding agents" or agents with strong coding capabilities. Even for tasks that do not inherently involve writing code, these agents’ ability to interact with applications, modify files, and generate custom software solutions has proven highly beneficial. For example, an AI agent could analyze a user’s bank statements, identify spending patterns, and then programmatically create a personalized budget dashboard.
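As a rough illustration of the budgeting example, the kind of small script a coding agent might write could look like the Python sketch below. Everything here is assumed for illustration: the CSV column names, the sample transactions, and the `spending_by_category` and `top_categories` helpers are hypothetical, and a real bank export would need its own parsing.

```python
import csv
import io
from collections import defaultdict
from decimal import Decimal
from typing import Dict, List, Tuple

# Hypothetical statement export; real bank formats vary.
SAMPLE_STATEMENT = """date,description,category,amount
2025-01-01,Landlord,rent,-1500.00
2025-01-03,Corner Grocer,groceries,-82.50
2025-01-05,Employer,income,3000.00
2025-01-09,Noodle Bar,dining,-36.00
2025-01-12,Corner Grocer,groceries,-41.25
"""


def spending_by_category(csv_text: str) -> Dict[str, Decimal]:
    """Sum outgoing (negative) amounts per category; deposits are ignored."""
    totals: Dict[str, Decimal] = defaultdict(Decimal)
    for row in csv.DictReader(io.StringIO(csv_text)):
        amount = Decimal(row["amount"])
        if amount < 0:
            totals[row["category"]] += -amount
    return dict(totals)


def top_categories(totals: Dict[str, Decimal]) -> List[Tuple[str, Decimal]]:
    """Order categories from largest to smallest spend for a dashboard view."""
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)
```

Calling `top_categories(spending_by_category(SAMPLE_STATEMENT))` yields the ranked totals that a dashboard could then chart. Amounts are parsed as `Decimal` rather than `float` to avoid rounding drift in currency sums.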

OpenAI has expressed ambitions for its Codex model to power general-purpose agents within ChatGPT, aiming to replicate and expand upon these capabilities. Anthropic has already taken steps in this direction with Claude Cowork, an extension of its Claude Code offering that aims to operate without requiring users to manually open a terminal. Perplexity, a company that initially placed significant emphasis on browser agents, has also launched a similar product named Personal Computer, indicating a strategic pivot towards more versatile agent functionalities.

While coding agents have gained significant traction among developers, it remains an open question whether these capabilities will translate into broader adoption among the general public. Companies like Google and OpenAI have pitched consumer use cases such as ordering groceries via Instacart or booking dinner reservations. These applications are convenient, but widespread adoption may hinge on whether users trust an agent to act reliably and avoid costly mistakes in such transactions. The evolution of these agents will likely require balancing added functionality against demonstrable trustworthiness.