Nvidia will open its annual GTC developer conference in San Jose, California, next week, with CEO Jensen Huang’s keynote scheduled for Monday at 11 am PT / 2 pm ET. The event, formally known as the GPU Technology Conference, is Nvidia’s main platform for announcing new products, forging strategic partnerships, and laying out its vision for computing and artificial intelligence. Huang’s two-hour address is expected to detail Nvidia’s role in shaping the future of AI and high-performance computing; it can be attended in person at the SAP Center or watched via livestream on the event’s official website.

The Enduring Significance of GTC as an Industry Barometer

GTC has evolved from a niche gathering for GPU enthusiasts into a bellwether for the entire technology industry, particularly for those invested in artificial intelligence. Over its history, the conference has been the stage for some of Nvidia’s most impactful announcements, from new GPU architectures like Volta, Ampere, and Hopper to major updates to software platforms such as CUDA, which has become the de facto standard for GPU programming. Its transformation mirrors Nvidia’s own journey from a graphics card manufacturer to a dominant force in AI hardware and software, with its GPUs powering everything from scientific supercomputers to the foundational models of generative AI.

The conference’s reputation for unveiling "category-defining" products and strategies draws an increasingly diverse audience each year, including AI researchers, developers, enterprise leaders, investors, and policymakers. Attendees look to GTC not just for product launches but for insight into the technology roadmap that will underpin the next generation of AI. This year’s three-day agenda focuses on the next frontiers for AI across industries including healthcare, robotics, and autonomous vehicles. Analysts and industry observers are watching in particular for how Nvidia plans to navigate intensifying competition and maintain its lead in the AI ecosystem.

Jensen Huang’s Vision: Steering the AI Revolution

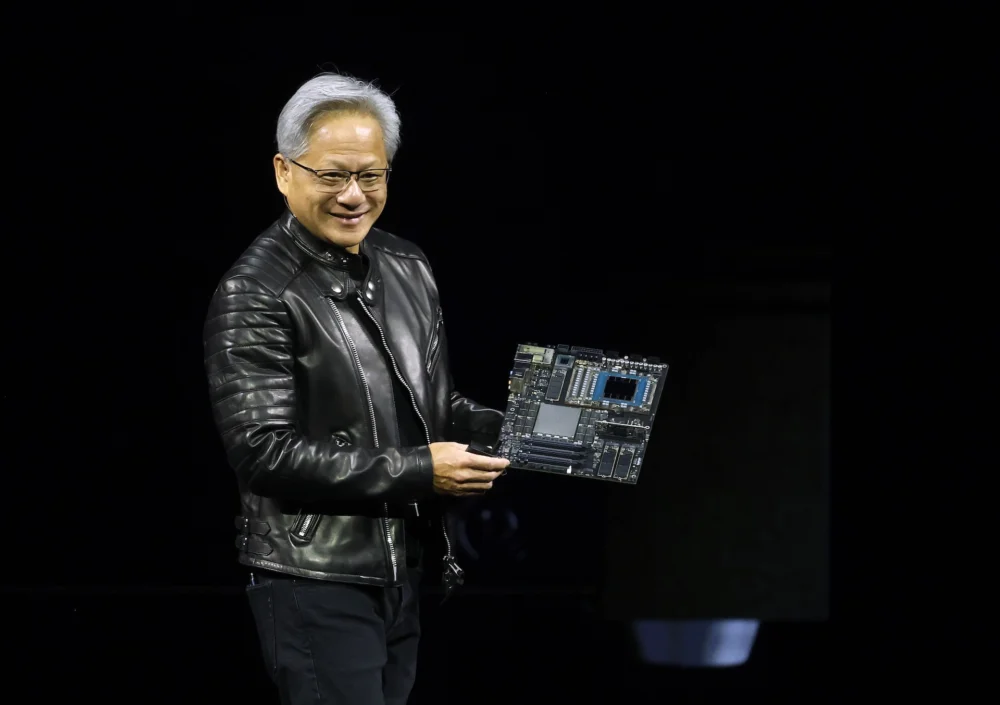

Jensen Huang, Nvidia’s co-founder and CEO, is renowned for his captivating keynotes, which often blend technical depth with an expansive vision for technology’s role in humanity’s future. His presentations are not merely product launches but philosophical discourses on the direction of computing, consistently emphasizing the transformative power of accelerated computing and AI. Huang has often articulated AI as the "new industrial revolution," with Nvidia providing the "AI factories" – the computing infrastructure necessary to fuel this revolution.

His consistent message has been that AI is not just about faster computers, but about entirely new ways of solving complex problems, from drug discovery to climate modeling. At previous GTCs, Huang has painted pictures of "digital twins" replicating entire factories or even cities, and of AI agents capable of understanding and interacting with the world in sophisticated ways. The upcoming keynote is expected to elaborate on this vision, likely focusing on how Nvidia’s hardware and software platforms are converging to make these scenarios a reality. Given the current fervor around generative AI and large language models, Huang will likely dedicate significant parts of his address to Nvidia’s contributions to these fields and to how it intends to democratize access to advanced AI capabilities for enterprises worldwide.

Anticipated Software Innovations: The Rise of Enterprise AI Agents with NemoClaw

On the software front, speculation surrounds the potential release of an open-source platform for enterprise AI agents, reportedly codenamed "NemoClaw." As initially reported by Wired, the platform would give businesses a structured framework for developing and deploying AI agents, which are autonomous software entities designed to execute multi-step tasks. If released, it would position Nvidia to compete with, or at least offer an alternative to, enterprise AI agent offerings from prominent players like OpenAI, which has also been expanding its toolkit for businesses to build and manage AI agents.

The implications of such a platform are substantial. By some industry projections, the global market for enterprise AI solutions could grow from hundreds of billions of dollars to over a trillion dollars in the coming years, driven by demand for automation, intelligent decision-making, and higher productivity across industries. An open-source framework like NemoClaw could significantly lower the barrier to entry for businesses looking to integrate AI agents into their operations, offering a standardized, scalable, and potentially more transparent approach than proprietary solutions. For instance, an AI agent could manage complex supply chain logistics, automate customer service interactions with better contextual understanding, or assist in analysis across vast datasets, freeing staff for more strategic tasks.

Nvidia’s foray into an open-source enterprise AI agent platform also signals a broader strategy to entrench its software ecosystem alongside its dominant hardware. By providing the foundational tools for AI development and deployment, Nvidia aims to ensure that its GPUs remain the preferred infrastructure for running these advanced AI applications. This move underscores the understanding that hardware alone, no matter how powerful, is insufficient without a comprehensive and accessible software stack to leverage its capabilities. The open-source nature could foster a vibrant developer community, accelerating innovation and adoption, which would ultimately drive demand for Nvidia’s underlying GPU infrastructure.

Hardware Breakthroughs: The Inference Chip and Market Reconfiguration

Perhaps the most eagerly awaited hardware announcement concerns a new chip specifically engineered to accelerate the AI inference process. As reported by The Wall Street Journal, this chip aims to address one of the most critical bottlenecks in scaling AI applications broadly: the speed and cost of inference. AI inference refers to the process where a trained AI model applies its learned knowledge to generate responses, make predictions, or execute decisions in real-world scenarios. This is distinct from the initial AI training process, which, while computationally intensive, is typically performed once or infrequently. The daily, real-time demand for inference, however, is immense and growing exponentially with every AI-powered application.
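The training/inference split described above can be illustrated with a deliberately tiny sketch. It assumes a one-variable linear model fit by least squares as a stand-in for a real AI model; the function names `train` and `infer` and the sample data are hypothetical and are not drawn from any Nvidia product. Training runs once over the full dataset, while inference is a small computation repeated for every incoming request.

```python
# Toy illustration only: a one-variable linear model stands in for a real AI model.
# `train` and `infer` are hypothetical names chosen for this sketch.

def train(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares (done once)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def infer(model, x):
    """Apply the already-trained parameters to one new input (done per request)."""
    slope, intercept = model
    return slope * x + intercept


# Training: one pass over the whole dataset.
model = train([1.0, 2.0, 3.0, 4.0], [3.0, 5.0, 7.0, 9.0])

# Inference: the same small computation repeated for each incoming request.
requests = [10.0, 20.0, 30.0]
predictions = [infer(model, x) for x in requests]
print(predictions)
```

Even in this toy case the cost profile differs: training touches every data point once, whereas total inference cost scales with the number of requests served, which is the workload an inference-focused chip would target.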

Currently, Nvidia commands an estimated 80% market share in the AI training segment, a testament to the unparalleled performance of its high-end GPUs for model development. However, the inference market presents a different competitive landscape, with increasing pressure from custom-built chips developed by tech giants like Google (with its Tensor Processing Units, or TPUs) and Amazon (with its Inferentia and Trainium chips), as well as startups focusing exclusively on inference optimization. These custom solutions often aim to offer a more cost-effective and energy-efficient way to run inference workloads at scale.

Nvidia’s rumored inference-focused chip would represent a strategic maneuver to not only defend but also expand its dominance into this crucial and rapidly growing segment. By offering faster and cheaper inference capabilities, Nvidia could unlock new possibilities for deploying AI across a wider array of applications, from embedded systems and edge devices to massive data centers. This would have significant implications for the cost of running AI services, making AI more accessible and ubiquitous. For developers and enterprises, a more efficient inference chip means lower operational costs for their AI models and the ability to serve more users with lower latency, thereby accelerating the deployment of AI-driven products and services across various industries.

The Groq Enigma: A Strategic Alliance Under Scrutiny

Adding another layer of intrigue to the conference is the anticipated clarification regarding Nvidia’s relationship with Groq, an inference-focused company. Kevin Cook, a senior equity strategist at Zacks Investment Research, highlighted to TechCrunch the considerable curiosity surrounding this tie-up. Reports late last year indicated that Nvidia paid approximately $20 billion to license Groq’s technology, a move that raised eyebrows given Groq’s unique approach to inference acceleration through its Language Processing Unit (LPU) architecture.

The Groq team, including founder Jonathan Ross and President Sunny Madra, reportedly agreed to join Nvidia to further advance and scale the licensed technology. This arrangement suggests a deeper integration than a mere licensing deal, potentially indicating a strategic acquisition of talent and intellectual property. Groq has garnered attention for its ability to deliver exceptionally low-latency inference, particularly for large language models, a capability that could be a game-changer in applications requiring real-time AI responses.

The strategic rationale for Nvidia’s substantial investment in Groq’s technology and its integration of Groq’s team is multifaceted. It could be a defensive play to blunt emerging competition in the inference space by acquiring a promising technology that complements Nvidia’s own inference offerings. Alternatively, it could be an offensive move to push AI performance further, using Groq’s specialized LPU architecture to reach higher speeds for specific inference workloads, particularly generative AI and real-time conversational AI. Attendees and analysts will be keen to learn how Nvidia plans to integrate Groq’s technology into its existing product lines, whether it will lead to new specialized hardware, and how it will inform future software optimizations within Nvidia’s ecosystem. The arrangement could redefine Nvidia’s inference strategy and further solidify its position as a full-stack AI solutions provider.

Broader Industry Impact and Thematic Tracks

Beyond the headline-grabbing product and partnership announcements, the three-day GTC event will delve into the profound impact of AI across diverse sectors. Dedicated tracks and demonstrations will showcase Nvidia’s AI capabilities and ongoing innovations in healthcare, robotics, and autonomous vehicles, among other critical industries.

In healthcare, AI is revolutionizing drug discovery, medical imaging analysis, and personalized treatment plans. Nvidia’s Clara platform, for instance, provides a unified framework for AI-powered healthcare and life sciences, from genomic sequencing to surgical robotics. GTC will likely feature new collaborations with pharmaceutical companies, research institutions, and medical device manufacturers, highlighting advancements in areas like AI-driven diagnostics or accelerated drug development using simulation.

Robotics, another major focus, is being transformed by AI-powered perception, navigation, and manipulation capabilities. Nvidia’s Isaac robotics platform, combined with its Omniverse simulation tools, enables developers to train, test, and deploy AI robots in virtual environments before physical deployment. Expectations are high for demonstrations of more agile, intelligent robots capable of performing complex tasks in unstructured environments, from industrial automation to logistics and even domestic applications.

Autonomous vehicles remain a cornerstone of Nvidia’s long-term vision. The company’s Drive platform continues to evolve, providing the full software and hardware stack for self-driving cars. GTC often features updates on partnerships with leading automotive manufacturers and advancements in AI perception, planning, and simulation for autonomous driving. Further developments in sensor fusion, real-time decision-making, and safety validation for autonomous systems are anticipated, illustrating Nvidia’s commitment to bringing safe and scalable self-driving technology to market. These sector-specific deep dives not only demonstrate the practical applications of Nvidia’s technology but also foster collaboration and accelerate innovation within these vital industries.

Nvidia’s Enduring Dominance and Future Outlook

Nvidia’s trajectory over the past decade has been nothing short of meteoric, driven by its strategic foresight in betting big on GPUs as the engines of the AI revolution. From its roots in gaming graphics, the company skillfully pivoted to become the indispensable infrastructure provider for the global AI ecosystem. Its market capitalization has soared, reflecting investor confidence in its continued leadership and the foundational nature of its technology.

The upcoming GTC conference is poised to reinforce Nvidia’s position not just as a hardware vendor but as a full-stack AI platform provider. By simultaneously advancing its chip technology, expanding its software ecosystem, and building strategic partnerships, Nvidia aims to create a virtuous cycle that entrenches its products across the entire AI value chain. The announcements at GTC will resonate far beyond the developer community, influencing the pace of AI innovation, the competitive landscape for tech giants, and the operating costs of enterprises worldwide. As AI continues its rapid ascent, GTC serves as an annual checkpoint, offering a glimpse of the innovations that will define the next chapter of technological progress and of Nvidia’s place in it.