Meta Platforms Inc. on Wednesday unveiled four new custom-designed computer chips, stepping up its push into artificial intelligence hardware. The chips, part of the Meta Training and Inference Accelerator (MTIA) line, are built to support the company’s growing generative AI capabilities and to improve the content ranking systems that shape what users see on Facebook and Instagram. The initiative underscores Meta’s increasing commitment to in-house chip development as a way to stay competitive in a fast-moving AI landscape.

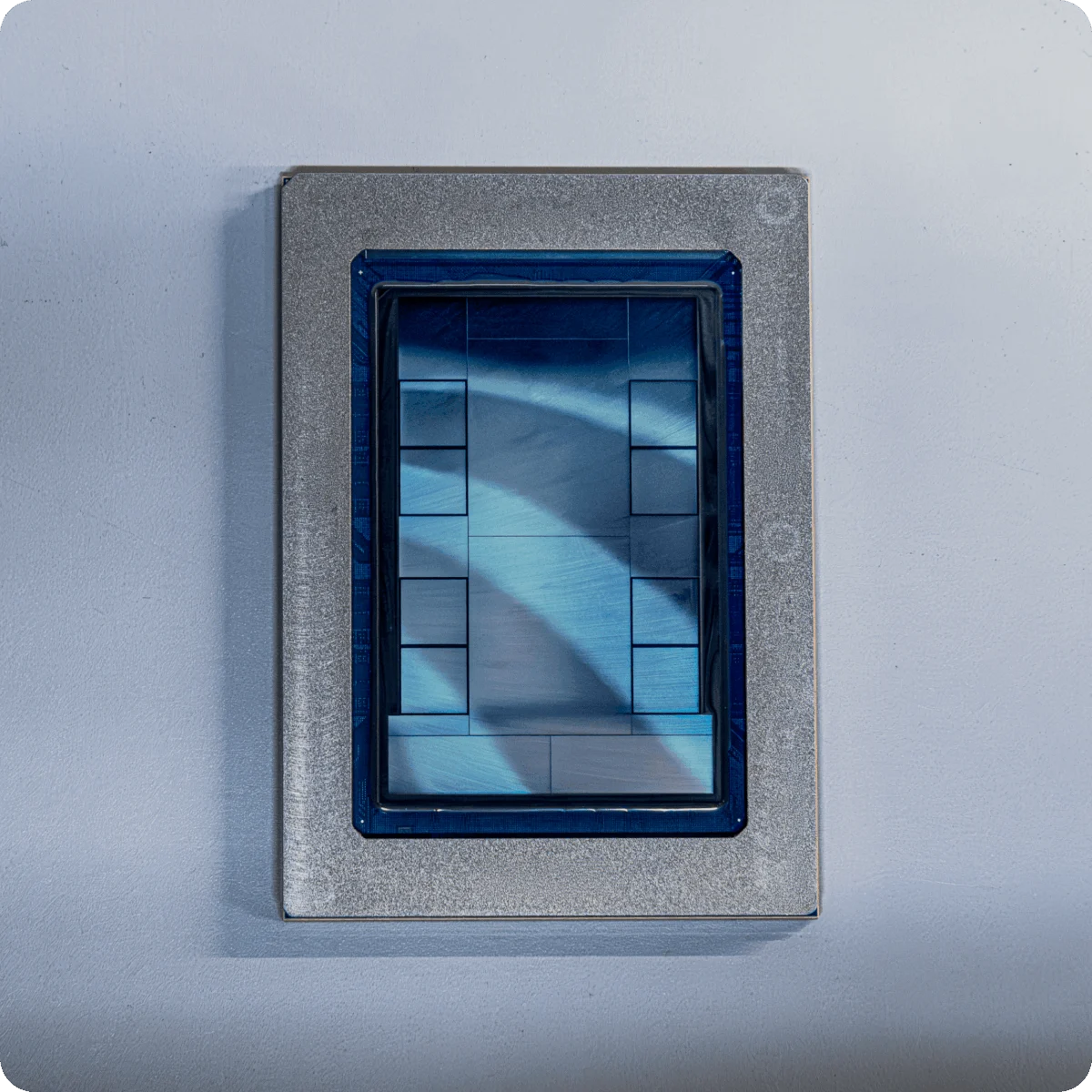

The latest MTIA chips mark a significant step forward for Meta’s hardware engineering efforts. The company partnered with Broadcom, a leading semiconductor company, to develop them. The new silicon is built on RISC-V, an open-standard instruction set architecture. That choice signals a move toward greater architectural flexibility and industry collaboration, and it could reduce reliance on proprietary instruction sets. The chips are fabricated by Taiwan Semiconductor Manufacturing Company (TSMC), the world’s largest contract chipmaker, known for its advanced manufacturing processes and substantial production capacity.

Among the newly announced silicon, the MTIA 300 chip is already in active production, signaling immediate deployment within Meta’s infrastructure. The remaining three chips – the MTIA 400, MTIA 450, and MTIA 500 – are projected to enter production and ship between early and late 2027. The rapid pace of this hardware development cycle is noteworthy within the semiconductor industry, where product lifecycles are typically measured in years. For a social media company, historically not a primary manufacturer of physical computing infrastructure, this accelerated timeline is particularly remarkable and speaks to the urgency and strategic importance Meta places on owning its AI hardware.

An Iterative Approach to AI Hardware Evolution

YJ Song, a Vice President of Engineering at Meta, articulated the company’s rationale behind this ambitious development cadence. He explained that the accelerating pace of AI model evolution is outstripping traditional chip development timelines. This disparity means that by the time conventional hardware reaches mass production, the AI workloads it was designed for may have already undergone substantial transformation.

"Rather than placing a bet and waiting for a long period of time, we deliberately take an iterative approach," Song stated in a company blog post. "Each MTIA generation builds on the last, using modular chiplets and incorporating the latest AI workload insights and hardware technologies." This modular chiplet design allows for greater flexibility and faster iteration, enabling Meta to integrate new advancements and adapt to emerging AI trends more swiftly than with monolithic chip designs. This approach mitigates the risk of obsolescence and ensures that Meta’s hardware remains aligned with the cutting edge of AI research and deployment.

The MTIA 300 is designed primarily for training the algorithms that rank and recommend content for the billions of people who use Meta’s platforms daily. This includes the personalized feeds on Facebook and the content discovery mechanisms within Instagram Reels. These systems depend on powerful, efficient training hardware capable of processing vast datasets.

The other three chips in the new lineup (MTIA 400, 450, and 500) are designed to accelerate "inference," the process of running trained AI models to produce real-time outputs such as text, images, or predictions. As generative AI applications become more sophisticated and widely deployed, demand for efficient and scalable inference hardware is expected to keep growing.

Performance Benchmarks and Future Capabilities

Meta has provided initial performance projections for its upcoming inference chips. The MTIA 400, currently undergoing testing, is expected to deliver performance "competitive with leading commercial products," a claim that suggests Meta is aiming to match specialized AI accelerators from established industry players. Meta expects to deploy the chip to its data centers in the near future.

The MTIA 450, scheduled to ship in early 2027, will feature a significant enhancement: double the amount of high-bandwidth memory (HBM) compared to the MTIA 400. Increased memory capacity is vital for handling larger AI models and more complex datasets, enabling faster and more efficient inference.
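To make the memory point concrete, here is a rough back-of-the-envelope sketch in Python. Every number in it is hypothetical: the model size, the 16-bit weight format, and the HBM capacities are illustrative assumptions, not published MTIA specifications. It estimates only the memory needed to hold a model's weights and checks whether that fits before and after a doubling of capacity.

```python
# Back-of-the-envelope sketch. All figures below are hypothetical and are
# NOT MTIA specifications; they only illustrate why capacity matters.

def weights_footprint_gb(num_params: int, bytes_per_param: int) -> float:
    """Memory needed to hold the model weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


def fits_in_hbm(num_params: int, bytes_per_param: int, hbm_gb: float) -> bool:
    """True if the weights alone fit in the given HBM capacity."""
    return weights_footprint_gb(num_params, bytes_per_param) <= hbm_gb


HYPOTHETICAL_PARAMS = 70_000_000_000  # an assumed 70-billion-parameter model
BYTES_PER_PARAM_16BIT = 2             # assumed 16-bit weights
BASE_HBM_GB = 96                      # assumed baseline capacity
DOUBLED_HBM_GB = 2 * BASE_HBM_GB      # the same capacity, doubled

footprint = weights_footprint_gb(HYPOTHETICAL_PARAMS, BYTES_PER_PARAM_16BIT)
fits_base = fits_in_hbm(HYPOTHETICAL_PARAMS, BYTES_PER_PARAM_16BIT, BASE_HBM_GB)
fits_doubled = fits_in_hbm(HYPOTHETICAL_PARAMS, BYTES_PER_PARAM_16BIT, DOUBLED_HBM_GB)

print(f"weights: {footprint:.0f} GB, fits in {BASE_HBM_GB} GB: {fits_base}, "
      f"fits in {DOUBLED_HBM_GB} GB: {fits_doubled}")
```

In practice, inference also needs memory for activations and cached intermediate state, so the weights-only figure is a lower bound on what a chip must hold.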

The MTIA 500, slated for release later in 2027, will have even more memory capacity than the MTIA 450. It will also incorporate "innovations in low-precision data." This suggests techniques that let AI models operate with reduced numerical precision, which can yield substantial gains in performance and power efficiency without significantly degrading accuracy. Such optimizations matter for scaling AI deployments to meet the demands of Meta’s global user base.
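Meta has not described which low-precision formats the MTIA 500 will use, so the following is only a generic illustration of the idea, not the company's method. It is a minimal Python sketch of symmetric 8-bit integer quantization, one common reduced-precision technique, applied to a handful of made-up weight values. The function names are hypothetical.

```python
# Generic illustration of reduced numerical precision (symmetric int8
# quantization). This is NOT a description of MTIA hardware or Meta's method.

def quantize_int8(values):
    """Map floats to integer codes in [-127, 127] plus one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to the signed 8-bit range used here.
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


weights = [0.50, -1.27, 0.03, 0.89]  # made-up example values
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# Worst-case difference between the original and restored values.
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("int8 codes:", codes)
print("max round-trip error:", max_error)
```

Each value is stored in one byte instead of the four bytes a 32-bit float would need, and the round-trip error stays within half of one quantization step. That trade of a small, bounded error for less memory and cheaper arithmetic is the general reason low-precision formats can improve performance and power efficiency.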

A Broader Strategy for AI Dominance

The development of the MTIA chip line is a cornerstone of Meta’s overarching strategy to amass significant computing power, a necessary prerequisite for developing and deploying cutting-edge artificial intelligence. The company first publicly detailed its ambitions in custom chip development in 2023 with the release of its initial MTIA product. This move aligns with a broader industry trend where major software companies and AI research labs are increasingly investing in bespoke hardware solutions to cater to their unique AI workloads.

OpenAI, a leading AI research organization, has also announced a strategic collaboration with Broadcom to develop custom accelerators, mirroring Meta’s approach in partnering with established semiconductor giants. This trend signifies a shift in the AI hardware ecosystem, where companies are no longer solely reliant on off-the-shelf solutions but are actively shaping the silicon that powers their AI innovations.

Navigating the Complexities of Custom Silicon

Despite this ambitious roadmap, Meta’s journey into custom chip design has not been without its challenges. Earlier this year, reports emerged suggesting that Meta might be scaling back some of its most ambitious in-house efforts to create high-end chips that would directly challenge industry leaders like Nvidia. The announcement of the new MTIA roadmap appears, in part, to be a strategic move to counter such narratives and reaffirm Meta’s commitment to its custom silicon strategy.

However, the creation of custom silicon remains an enormously expensive and technically intricate undertaking. The research, development, design, verification, and manufacturing processes demand substantial capital investment and specialized expertise. Consequently, Meta is expected to continue relying heavily on purchasing AI hardware from external vendors, at least in the immediate future, to meet its vast and growing computing needs.

This reality is evident in Meta’s recent surge in chip acquisitions. The unveiling of the new MTIA chips follows closely on multi-billion-dollar deals with Nvidia and AMD, two of the leading providers of AI accelerators. Meta has also secured an agreement to rent chips designed by Google, further diversifying its hardware supply. This dual approach, developing custom silicon while also procuring from external partners, lets Meta stay flexible and access the latest hardware while investing in its long-term hardware independence.

Implications for the AI Landscape

Meta’s aggressive push into custom AI chip development has several significant implications for the broader technology industry. Firstly, it signals a deepening commitment by major tech players to vertical integration in AI, aiming to optimize hardware for their specific software needs and gain a competitive advantage. This can lead to more efficient and cost-effective AI deployments.

Secondly, the use of an open-standard architecture like RISC-V by a company of Meta’s stature could accelerate its uptake and development as an alternative to established proprietary instruction sets. This could foster greater competition and innovation within the chip design ecosystem.

Thirdly, Meta’s iterative chip development approach, focused on modularity and rapid adaptation, may set a new standard for how AI hardware is designed and evolved. This could allow for quicker responses to the fast-changing demands of AI research and applications.

Finally, the substantial investments in custom silicon by companies like Meta and OpenAI highlight the critical role of specialized hardware in unlocking the full potential of advanced AI. As AI models continue to grow in complexity and scale, the demand for tailored computing power will only intensify, driving further innovation and competition in the semiconductor industry. Meta’s new MTIA roadmap is a clear indicator that the company is positioning itself to be a significant player in shaping the future of AI hardware.