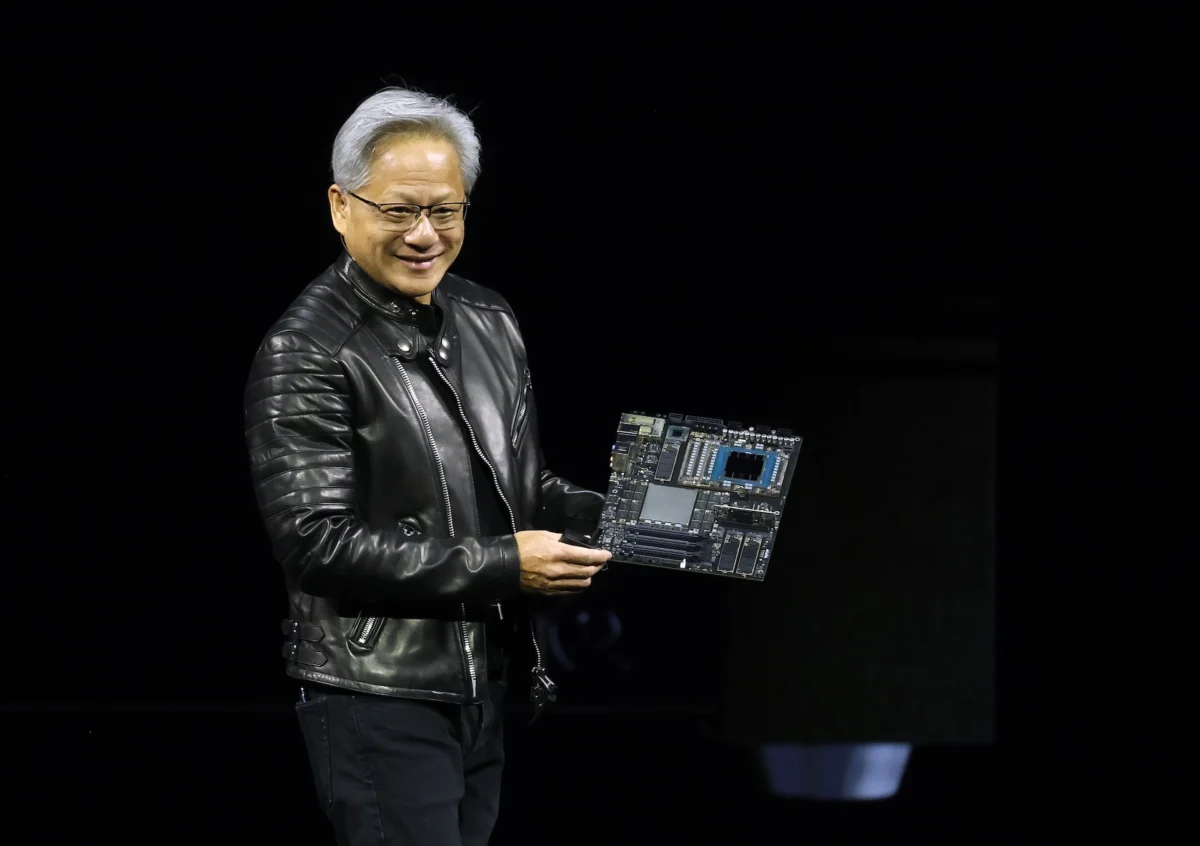

The highly anticipated annual GTC developer conference, Nvidia’s flagship event, commenced on Monday, March 18, 2024, in San Jose, California, drawing global attention to the future of artificial intelligence and advanced computing. CEO Jensen Huang’s keynote address, a pivotal two-hour presentation scheduled for 11 a.m. PT / 2 p.m. ET, is poised to unveil the company’s strategic roadmap, introduce groundbreaking products, and underscore its vision for the pervasive integration of AI across industries. This year’s conference, officially running from March 18 to March 21, marks a critical juncture for Nvidia as it navigates an intensely competitive yet rapidly expanding AI market, where its technologies are increasingly indispensable.

A Deep Dive into GTC’s Significance and Evolution

Historically known as the GPU Technology Conference, GTC has evolved far beyond its initial focus on graphics processing units to become the preeminent global gathering for innovators, researchers, and developers in artificial intelligence. From its inception, GTC served as a platform for Nvidia to showcase the versatility of its GPUs, which were initially designed for gaming and professional visualization but were soon recognized for the parallel processing capabilities essential to scientific computing and, crucially, AI. Over the past decade, as AI transitioned from academic research to mainstream application, GTC has mirrored this shift, becoming a bellwether for the industry’s direction. Attendees, whether in person at the SAP Center or via the global livestream, anticipate not just product announcements but also profound insights into the technological frontiers that Nvidia is actively shaping. The conference agenda is meticulously curated to cover a vast spectrum of AI applications, from foundational research in large language models to practical deployments in healthcare, robotics, autonomous vehicles, and industrial automation. This multidisciplinary approach underscores Nvidia’s ambition to be the foundational computing platform for virtually every sector touched by AI.

Jensen Huang’s Keynote: A Glimpse into the Future of Computing

Central to the GTC experience is Jensen Huang’s keynote. Known for his charismatic presentations and often dramatic reveals, Huang typically uses this stage to articulate Nvidia’s overarching philosophy and strategic direction. This year, the focus is expected to heavily lean into Nvidia’s expanding role in democratizing and accelerating AI. Industry observers anticipate a comprehensive address that not only celebrates Nvidia’s current achievements but also lays out an ambitious blueprint for future innovation. Topics likely to be covered include advancements in GPU architectures, new software frameworks, and strategic partnerships that collectively aim to overcome the lingering challenges in scaling AI applications globally. His pronouncements often set the tone for the entire technology sector, influencing investment, research, and development trajectories for years to come.

Anticipated Hardware Innovations: Redefining AI Inference

One of the most keenly awaited announcements on the hardware front revolves around a rumored new chip specifically engineered to accelerate the AI inference process. While Nvidia currently commands an estimated 80% market share in the AI training segment (where models are initially developed and taught from vast datasets, requiring immense computational power), the inference market presents a distinct and rapidly growing opportunity. AI inference refers to the phase where a trained AI model applies its learned knowledge to generate responses or make real-time decisions. This process, though typically less computationally intensive per request than training, demands speed, efficiency, and cost-effectiveness for widespread deployment.

The development of a specialized inference chip signals Nvidia’s aggressive push to dominate this critical market segment. Faster and more economical inference is widely regarded as a key bottleneck to the broad scaling of AI applications across various industries, from enabling instant responses in generative AI chatbots to facilitating real-time object recognition in autonomous systems. By offering a solution optimized for inference, Nvidia aims to extend its market leadership beyond the data center, empowering enterprises to deploy AI models with unprecedented agility and efficiency at the "edge" and within diverse computing environments. This strategic move also positions Nvidia to counter intensifying competition from tech giants like Google and Amazon, which are increasingly developing custom chips (such as Google’s TPUs and Amazon’s Inferentia) for their own inference workloads, and from emerging startups focused solely on inference acceleration. Analysts suggest that a highly optimized inference chip from Nvidia could significantly lower the operational costs of deploying AI, thereby catalyzing a new wave of AI adoption and innovation.

Software Frontiers: The Potential Debut of NemoClaw

Beyond hardware, Nvidia’s software ecosystem is equally crucial to its market dominance. Rumors abound regarding the potential release of an open-source platform for enterprise AI agents, reportedly dubbed "NemoClaw." As initially reported by Wired, this platform could offer businesses a structured, comprehensive framework for building, deploying, and managing AI agents—sophisticated software entities capable of autonomously executing multi-step tasks. The strategic implications of NemoClaw are substantial. In an era where AI agents are becoming increasingly sophisticated, capable of automating complex workflows and interacting intelligently with users and other systems, providing an open-source platform positions Nvidia as a central facilitator for enterprise AI development.

NemoClaw would enable businesses to develop custom agents tailored to their specific operational needs, from automating customer service interactions to streamlining data analysis or orchestrating complex supply chain logistics. By offering an open-source solution, Nvidia could foster a vibrant developer community, encouraging widespread adoption and innovation built atop its foundational technologies. This move would also position Nvidia to compete with similar enterprise AI agent offerings from companies such as OpenAI, which are increasingly focused on providing tools for businesses to leverage advanced AI capabilities. The success of such a platform would not only deepen Nvidia’s integration into enterprise IT infrastructure but also create a synergistic relationship with its hardware offerings, ensuring that its powerful GPUs are the preferred engines for running these advanced AI agents.

Strategic Partnerships and Industry-Specific Demonstrations

GTC is not merely a stage for product launches; it is also a nexus for collaboration. The conference is expected to feature a diverse array of partnership announcements and live demonstrations showcasing Nvidia’s AI capabilities across a myriad of industries. These demonstrations are vital in illustrating the tangible impact of Nvidia’s technologies. In healthcare, advancements in AI-powered diagnostics, drug discovery, and personalized medicine, often accelerated by Nvidia platforms like Clara, are anticipated. For robotics, the conference will likely highlight progress in autonomous navigation, human-robot interaction, and industrial automation, leveraging Nvidia’s Isaac robotics platform. The autonomous vehicles sector, a long-standing focus for Nvidia through its Drive platform, will undoubtedly showcase breakthroughs in self-driving technology, sensor fusion, and safety systems. These real-world applications underscore Nvidia’s commitment to translating cutting-edge research into practical, transformative solutions. Each partnership and demonstration serves as a testament to the versatility and power of Nvidia’s full-stack approach, encompassing hardware, software, and services tailored for specific industry needs.

The Groq Enigma: A Potential Game-Changer in Inference

One of the most intriguing rumors swirling around GTC involves Nvidia’s reported relationship with Groq, an inference-focused AI chip challenger. Kevin Cook, a senior equity strategist at Zacks Investment Research, highlighted the significant curiosity surrounding this tie-up. Reports from late last year suggested that Nvidia paid a staggering $20 billion to license Groq’s technology, with Groq’s founder Jonathan Ross, president Sunny Madra, and other key team members agreeing to join Nvidia to advance and scale the licensed innovations.

If true, this licensing deal could be a monumental strategic move for Nvidia, particularly in the competitive inference market. Groq has garnered significant attention for its innovative Language Processing Unit (LPU) architecture, which reportedly offers unparalleled speed and efficiency for large language model inference. Integrating Groq’s technology could provide Nvidia with a substantial competitive edge, allowing it to offer an even broader and more optimized portfolio of inference solutions. The reported sum and the absorption of key personnel suggest a deep strategic imperative to leverage Groq’s unique intellectual property and engineering talent. The reported arrangement would reinforce Nvidia’s commitment to securing its leadership across all facets of the AI compute stack, from the most demanding training tasks to the highly latency-sensitive inference applications that power real-time AI experiences. It also highlights Nvidia’s willingness to invest heavily and strategically to maintain its technological advantage in a rapidly evolving landscape.

Nvidia’s Strategic Positioning and Market Dominance

Nvidia’s journey from a graphics card manufacturer to the undisputed leader in AI computing is a testament to its foresight and relentless innovation. The company’s market capitalization has soared, reflecting its pivotal role in the ongoing AI revolution. CUDA, its parallel computing platform and programming model, has become the de facto standard for AI development, creating a powerful ecosystem lock-in that is difficult for competitors to overcome. By offering a comprehensive stack of hardware (GPUs, DPUs), software (CUDA, cuDNN, TensorRT), and services, Nvidia has made itself the preferred partner for AI researchers and enterprises alike.

The announcements at GTC 2024 are expected to further solidify this position. By addressing both the training and inference markets with specialized solutions, Nvidia aims to capture an even larger share of the burgeoning AI economy. The company’s strategic investments in research and development, coupled with its aggressive pursuit of partnerships and potential acquisitions, demonstrate a clear intent to remain at the forefront of AI innovation. The broader impact of GTC extends beyond Nvidia itself, influencing the entire semiconductor industry, the trajectory of AI development, and the competitive landscape. As AI continues to permeate every aspect of technology and society, Nvidia’s role as the engine powering this transformation becomes ever more critical, making GTC a must-watch event for anyone invested in the future of computing.

Broader Implications and Future Outlook

The implications of GTC 2024’s expected announcements are far-reaching. For the semiconductor industry, Nvidia’s innovations could set new benchmarks for performance and efficiency in AI processing, potentially forcing competitors to accelerate their own development cycles. For the broader AI community, faster, cheaper, and more accessible inference capabilities, coupled with robust open-source platforms for AI agents, could significantly democratize AI development and deployment. Startups and smaller enterprises, previously constrained by the high cost and complexity of AI infrastructure, might find new avenues for innovation.

Economically, Nvidia’s continued growth and dominance contribute significantly to the tech sector’s overall health, attracting substantial investment and fostering job creation in high-tech fields. The company’s strategic moves, particularly in expanding its software ecosystem and addressing the inference bottleneck, are crucial for advancing the commercial viability and widespread adoption of AI across all sectors. As AI models become more complex and their applications more diverse, the demand for powerful, efficient, and versatile computing platforms will only intensify. Nvidia, through events like GTC, continues to position itself as the indispensable architect of this intelligent future, navigating the technological landscape with a blend of innovation, strategic vision, and market acumen. The world watches GTC not just to see what Nvidia will do, but to glimpse the future of AI itself.